Posted on 25 Aug 2023



UTS has been awarded a contract with Defence to continue developing the advanced brain-computer interface (BCI) created by Distinguished Professor Chin-Teng Lin, Professor Francesca Iacopi and Dr Thomas Do.

Under the contract, the team will develop the BCI over 30 months to improve its speed, accuracy and reliability, taking the system from technology readiness level 4 (TRL4) to a fully functioning prototype at TRL6.

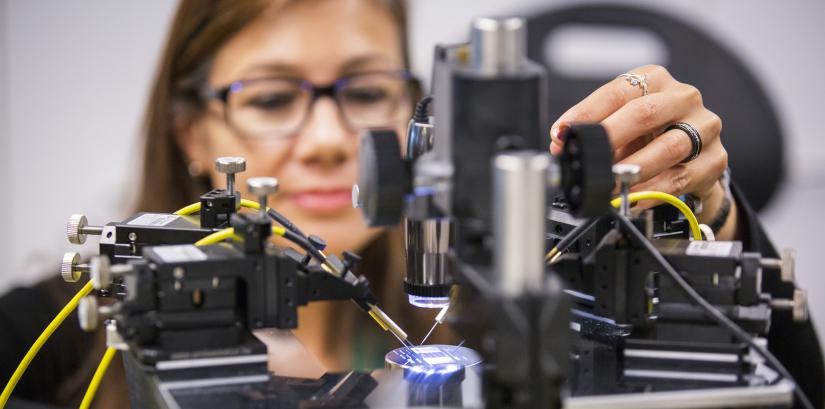

Professor Iacopi will further improve the electrical and mechanical performance of the BCI's graphene sensors and demonstrate that their production process can be scaled up. She will also develop micropatterned designs that optimise the sensors for wear on hair-covered regions of the scalp.

“We are really excited to continue working on the BCI. Our graphene sensors are ideal for use due to their durability, low skin contact resistance and anti-corrosion properties, so it is great to see them applied in demanding environments like those required by Defence,” said Professor Iacopi.

“The sensors are very thin and comfortable to wear and allow users to move around freely in a wide variety of challenging operating environments and outside laboratories,” she added.

On the electronics and software front, Distinguished Professor Lin's team will continue adapting the BCI for use with a range of augmented and virtual reality displays, and will refine the electronic circuits and algorithms to reduce command completion time.

The team will also introduce AI-based adaptive human-autonomy teaming to improve mutual understanding and trust between users and autonomous robots.

“Taking our immersive BCI from TRL4 to TRL6 will be a major step to controlling robots, machinery and computer systems by thought, and making physical input mechanisms such as consoles, touch screens and keyboards redundant,” Professor Lin said.

“The human-autonomous teaming allows persons to use the BCI through two modalities – ‘in-the-loop’, controlling a robot or system with explicit commands, and ‘on-the-loop’, only intervening in a robot’s automated actions when the user notices problems, for example in complex or rapidly evolving situations,” he added.

Once development is complete, the BCI could be tailored to a wide range of purposes and sectors.

For example, in the disability and medical sectors it could operate prosthetic limbs, wheelchairs and monitoring equipment. In industry, it could direct collaborative robots performing repetitive or hazardous tasks in confined and dangerous environments.